A teaching highlight

This is the story (narrator: Herbert Jaeger) of how a machine learning seminar in the year 2007 took part in an international financial forecasting competition. Neither the participants nor the professor had any previous exposure to financial forecasting, and the deadline was only 2.5 months away when we started. A mad race. The story is worth telling in a little more detail, since it illustrates how a solid machine learning project should be carried out, and it illustrates the Jacobs spirit. And yes, against twenty-four international competitors, we won.

Background

Forecasting financial time series (like stock indices, currency exchange rates, gross national products) is a task of obviously great interest. This task has been investigated for decades in economics, and numerous specialized financial forecasting methods have been developed. There are dedicated journals, textbooks, and professional societies.

Independently of this specialized application domain, machine learning research had developed, over the years, a whole spectrum of general-purpose methods for predicting time series. Today, neural network methods are the most widely used among them.

Neural network methods (and other general-purpose techniques) had been applied to financial forecasting before the time when the competition took place. But when put to the test, it had been consistently found that general-purpose methods came out inferior to specialized financial forecasting methods. Competing methods were compared in large-scale time series prediction competitions, the most comprehensive (at the time of our competition) being the “M3” competition conducted in 1999.

The state of the art in neural network prediction methods had, however, advanced in the interval between that 1999 competition and the year 2007. The main purpose of the “NN3” competition, in which the Jacobs group participated, was to compare neural network (and other general-purpose) methods anew with specialized financial forecasting methods.

The competition

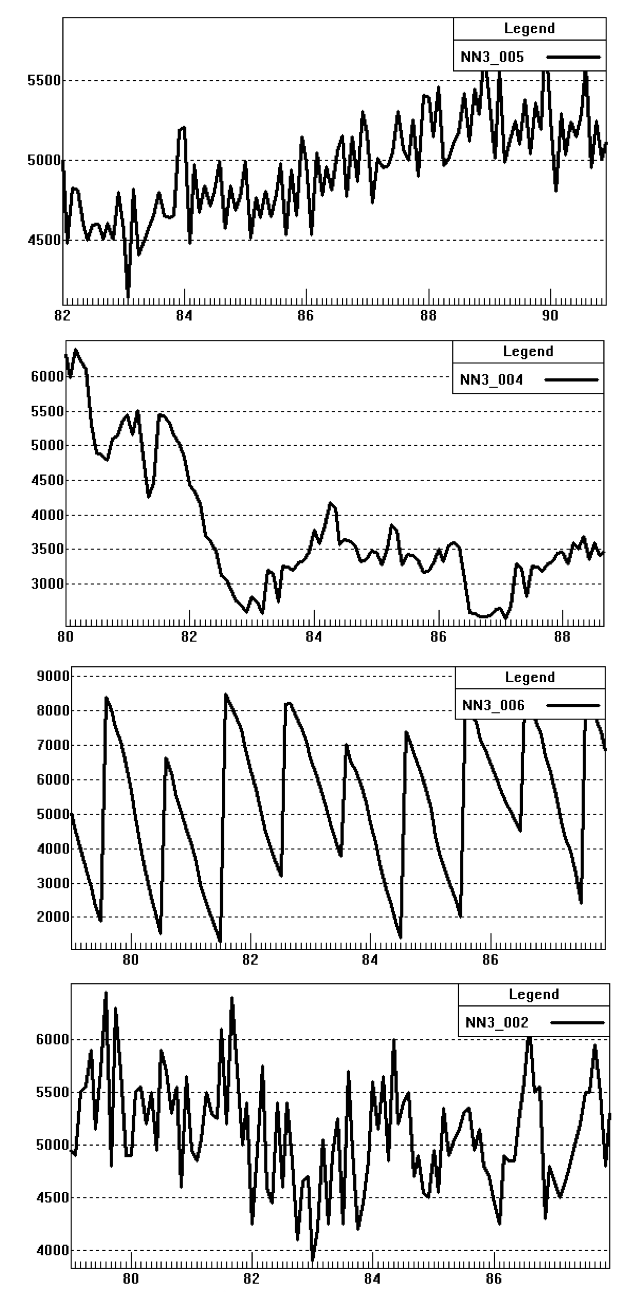

The organizers put online a set of 111 financial time series. The only information given about these data was that the time series were monthly measurements of a variety of micro- and macroeconomic indicators from a variety of sources. To give an impression, the figure above shows some of the given series.

The task was to forecast each of these time series 18 months ahead (= compute 18 additional data points per time series). A particular (intentional) difficulty of this task arose from the circumstance that the time series came from a wide diversity of sources and thus reflected a similarly large variety of financial/market mechanisms. Thus, one of the main theoretical and technical challenges was that the prediction methods had to be very robust and versatile.

Because many prediction methods (both specialized and general-purpose) are labor- and computer-intensive, predicting 111 series poses a real resource and workload hurdle. Therefore, there was also an option to participate with a subset of only 11 of the given time series.

The competition was organized by a team from the Lancaster University Management School, sponsored (among others) by grants from the financial consulting company SAS and the International Institute of Forecasters. It was announced in Fall 2006, and the deadline for submitting predictions was May 14, 2007. There were 25 submissions for the full set of 111 series and 44 submissions for the reduced set of 11 series. The results were announced by the end of October 2007 (http://www.neural-forecasting-competition.com/NN3/results.htm).

The seminar

In the Smart Systems graduate program in Computer Science at that time, our master's students had to take three research seminars. Since the competition deadline coincided perfectly with the end of the Spring semester at Jacobs, I found that participating in this competition was a great opportunity for a treat of hands-on education in machine learning. So in Spring 2007, I announced that the topic of my research seminar would be financial time series prediction and participation in this competition.

Five master's students registered for this seminar:

- Iulian Ilies (mathematics)

- Olegas Kosuchinas (Smart Systems)

- Monserrat Rincon (mathematics)

- Vytenis Sakenas (Smart Systems)

- Narunas Vaskevicius (Smart Systems)

Neither these modeling warriors, nor I, had any previous experience with financial forecasting. Thus we structured the little time that we had as follows:

Month 1

- studying the basic principles of financial datasets and specialized forecasting methods (= we just all read the standard textbook of that field)

- implementing two of the “traditional” forecasting methods

- preliminary analysis of the 111 datasets (extracting trends, cyclic and random components, general aim: getting a feeling for the various mechanisms and features of these time series)
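The preliminary analysis step (splitting a series into trend, cyclic and random components) can be illustrated with a classical additive decomposition. The following is only a minimal sketch, not the code used in the seminar: it assumes monthly data with a 12-month cycle and an additive model, and the function names `centered_moving_average` and `decompose` as well as the synthetic example series are invented for illustration.

```python
import math


def centered_moving_average(series, period=12):
    """Trend estimate via a centered moving average spanning one full period.

    Assumes an even period (12 for monthly data). Positions where the
    window does not fit are returned as None.
    """
    half = period // 2
    trend = [None] * len(series)
    for t in range(half, len(series) - half):
        window = series[t - half : t + half + 1]
        # The two end points get half weight, so the weights sum to `period`.
        total = 0.5 * window[0] + sum(window[1:-1]) + 0.5 * window[-1]
        trend[t] = total / period
    return trend


def decompose(series, period=12):
    """Additive split: series = trend + seasonal + residual."""
    trend = centered_moving_average(series, period)
    sums = [0.0] * period
    counts = [0] * period
    for t, (y, m) in enumerate(zip(series, trend)):
        if m is not None:
            sums[t % period] += y - m  # detrended value for this calendar position
            counts[t % period] += 1
    means = [s / c if c else 0.0 for s, c in zip(sums, counts)]
    offset = sum(means) / period  # center the seasonal pattern around zero
    means = [m - offset for m in means]
    seasonal = [means[t % period] for t in range(len(series))]
    residual = [
        None if m is None else y - m - s
        for y, m, s in zip(series, trend, seasonal)
    ]
    return trend, seasonal, residual


# Synthetic example (an assumption, not competition data):
# a linear trend plus a 12-month cycle with amplitude 10.
example = [0.5 * t + 10.0 * math.sin(2.0 * math.pi * t / 12) for t in range(48)]
trend, seasonal, residual = decompose(example)
```

On real series the residual will not vanish as it does for this synthetic example, and inspecting it is one way of getting a feeling for how noisy a given series is.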

Month 2

- for students: implementation of 5 textbook methods for predicting time series. These were general-purpose methods from various fields, not specialized financial forecasting methods (A. neural networks, B. support vector machines, C. wavelets, D. linear IIR filters, E. local nonparametric modelling)

- for myself: adaptation of the general-purpose prediction method that I had developed over the preceding years (Echo State Networks, a special type of neural network)

Month 3

- comparative assessment of the methods implemented so far (2 traditional financial, 5 generic textbook, 1 “home-made”)

- design of a “best-possible” prediction method, based on the experience gained so far. This turned out to be a combination of some steps of “traditional” financial forecasting methods for data preprocessing, with Echo State Networks for computing the predictions

- actually computing the predictions, writing a report paper (which was a requirement of the competition), and submitting
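To give a rough idea of what computing predictions with an Echo State Network involves, here is a minimal sketch in plain Python. It is not the seminar's implementation (that is described in the competition report); it only shows the generic recipe: a fixed random recurrent network is driven by the series, a linear readout is fitted by ridge regression, and the network is then run freely for 18 steps. All names (`make_reservoir`, `step`, `solve`, `ridge_fit`, `esn_forecast`) and all parameter values are assumptions made for illustration. The input series is assumed to be already preprocessed and scaled to roughly the range [-1, 1]. Instead of the usual spectral-radius scaling, the sketch scales the maximum absolute row sum of the weight matrix below 1, a simpler and more conservative way to make the network forget its initial state.

```python
import math
import random


def make_reservoir(size, density=0.2, scale=0.9, seed=0):
    """Random sparse recurrent weights plus random input weights."""
    rng = random.Random(seed)
    w = [[rng.uniform(-1, 1) if rng.random() < density else 0.0
          for _ in range(size)] for _ in range(size)]
    # Scale so that the maximum absolute row sum equals `scale` < 1.
    norm = max(sum(abs(v) for v in row) for row in w) or 1.0
    w = [[scale * v / norm for v in row] for row in w]
    w_in = [rng.uniform(-1, 1) for _ in range(size)]
    return w, w_in


def step(w, w_in, state, u):
    """One reservoir update: x <- tanh(W x + w_in * u)."""
    return [math.tanh(sum(a * b for a, b in zip(row, state)) + wi * u)
            for row, wi in zip(w, w_in)]


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def ridge_fit(features, targets, ridge):
    """Readout weights from the normal equations (F^T F + ridge I) w = F^T y."""
    n = len(features[0])
    a = [[sum(f[i] * f[j] for f in features) + (ridge if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    b = [sum(f[i] * y for f, y in zip(features, targets)) for i in range(n)]
    return solve(a, b)


def esn_forecast(series, horizon=18, size=30, ridge=1e-2, washout=10, seed=0):
    """Train a one-step-ahead readout, then iterate it `horizon` times."""
    w, w_in = make_reservoir(size, seed=seed)
    state = [0.0] * size
    features, targets = [], []
    for t in range(len(series) - 1):
        state = step(w, w_in, state, series[t])
        if t >= washout:  # skip states still influenced by the zero start state
            features.append(state + [1.0])  # constant 1.0 acts as a bias term
            targets.append(series[t + 1])
    w_out = ridge_fit(features, targets, ridge)
    state = step(w, w_in, state, series[-1])
    forecasts = []
    for _ in range(horizon):
        y = sum(wo * f for wo, f in zip(w_out, state + [1.0]))
        forecasts.append(y)
        state = step(w, w_in, state, y)  # feed the prediction back as input
    return forecasts


# Synthetic example (an assumption, not competition data): a 12-month cycle.
demo_series = [math.sin(2.0 * math.pi * t / 12) for t in range(120)]
demo_forecast = esn_forecast(demo_series)
```

The `ridge` parameter controls how strongly the readout weights are shrunk toward zero. With series as short as those in the competition, the choice of such a regularization parameter typically matters more than the details of the network itself.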

Of course, we finished only just in time, that is, shortly before the midnight deadline on a Sunday night, after a feverish weekend spent all together in my office. There seems to be no other way to do stuff at Jacobs…

Because we implemented and tested a variety of “standard” methods during our project, we had a fairly clear impression that the method which we ultimately designed would be highly competitive – in fact, we were quite confident that we would score high in the competition. But we also knew that at the same time, other highly qualified groups around the world would feel the same…

Our approach is presented in some technical detail in our competition report: I. Ilies, H. Jaeger, O. Kosuchinas, M. Rincon, V. Šakėnas, N. Vaškevičius (2007): Stepping forward through echoes of the past: forecasting with Echo State Networks. Report on our winning entry in the 2007 financial time series competition

What did we learn in this seminar? Besides the technical knowledge that was acquired (basics of financial forecasting, overview of other standard prediction methods, basics of Echo State Network theory), there were two other yields from this seminar:

- first-hand experience in the organisation and day-to-day management of a complex joint project under crushing deadline pressure, and

- a truly hands-on experience of what “machine learning” really means, beyond all theory: namely, that the ultimate quality of the models hinges crucially on the efforts of the human-in-the-loop, on the “feeling” that one develops for a particular type of data.

Smart Systems seminars were usually graded, with every student receiving a grade for his/her specific performance. We felt that this would not do justice to the great joint effort and always fully shared work that characterized this seminar. Therefore, we asked the Dean for permission to register this course as an ungraded course. Consequently, each participant received just a “pass” entry in the transcript and, to compensate, a detailed, personal recommendation letter for private future use.

Significance of winning the competition

Winning this competition did not imply that our method was the best possible at that time. Inspecting the competition result page, one will find entries at the top of the scoring table that need an explanation:

The SMAPE column gives the symmetric mean absolute percentage error, an average relative prediction error. Our entry has a SMAPE of 15.18%. There are two other entries which have better SMAPEs of 14.84% and 14.89%. These refer to predictions that were made with methods rooted in the financial forecasting tradition, and which were used in this competition as points of reference. These specialized methods were not ranked in this competition, because it expressly targeted general-purpose methods. One finds, in summary, that our method is placed close to the forefront of a group of top-performing specialized methods.
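For readers unfamiliar with this error measure, here is a small sketch of how a SMAPE value can be computed. It assumes the commonly used definition, in which each absolute error is divided by the average of the absolute actual and predicted values. The exact variant used by the organizers should be checked on the competition page. The function name `smape` and the convention of counting a 0/0 term as zero error are choices made for this illustration.

```python
def smape(actual, forecast):
    """Symmetric mean absolute percentage error, in percent (0 to 200)."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    total = 0.0
    for a, f in zip(actual, forecast):
        denom = (abs(a) + abs(f)) / 2.0
        # Convention chosen here: if both values are zero, count zero error.
        total += abs(a - f) / denom if denom else 0.0
    return 100.0 * total / len(actual)
```

For example, predicting 50 where the true value is 100 gives an error of 50 relative to the average 75, that is, about 66.7%.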

The significance of this outcome was that for the first time, a generic neural network method turned out to be competitive with specialized financial forecasting methods. Given that the two superior contributions were entered by renowned financial modelling institutions / groups with long-standing experience, and ours just sprang from a short educational adventure (though in true Jacobs University spirit), the performance level that we reached becomes noteworthy. We saw here an indication that neural networks were finally living up to the original hopes in the field, namely, that learning from biological systems is ultimately the best way of realizing artificial intelligence.

A final note. Today, in the age of deep learning, it seems an established fact that neural networks would win everything. However, I want to point out that currently hot deep learning methods would not necessarily perform well with this competition task. The reason: deep learning methods excel when one has very large amounts of training data. This competition however came with a data set which, by deep learning standards, is extremely small and moreover, very heterogeneous. In such scenarios, the key to success is really not which modeling method one chooses but how skilfully one regularizes the method that one picked. Want to learn more about the magic of regularization? Then register for my machine learning course.